Originally posted on: techsabado.com/2025/11/08/burning-chrome-minidisc-an-almost-forgotten-favorite/

In the crowded museum of audio history, the MiniDisc stands like a stubborn exhibit that refuses to gather dust. Launched in 1992, Sony’s answer to the compact cassette promised a future of sleek portability and digital convenience. The disc, enclosed in a protective plastic shell, seemed ahead of its time. It resisted scratches and dust, held music encoded through Sony’s ATRAC compression system, and could be re-recorded endlessly without degradation. For a while, it looked like the perfect format to bridge the gap between the tactile charm of analog and the clean efficiency of digital audio.

Sony envisioned the MiniDisc as the next step after the cassette Walkman, a product that had already defined a generation of music listening habits. Unlike portable CD players, which were prone to skipping, MiniDisc players read ahead into a memory buffer that kept playback steady through bumps. The discs could be slipped into pockets or tossed into backpacks without fear of scratches. A student on a crowded Tokyo train could carry hours of music without lugging around a tower of discs. A field journalist could record interviews on a device smaller than a paperback novel, then erase and reuse the same disc indefinitely.

The rise and the stall

The MiniDisc found enthusiastic acceptance in Japan, where Sony’s influence was strongest, and in parts of Europe where consumers valued its mix of portability and durability. For journalists, it became a reliable field recorder. For musicians, it offered a new way to capture demos without the fragility of DAT tapes or the noise of cassettes. But in the United States, one of the largest consumer electronics markets, the format never found its footing. Compact discs were already entrenched, and by the late 1990s, CD burners and blank CD-Rs undercut one of the MiniDisc’s main advantages: the ability to record easily at home.

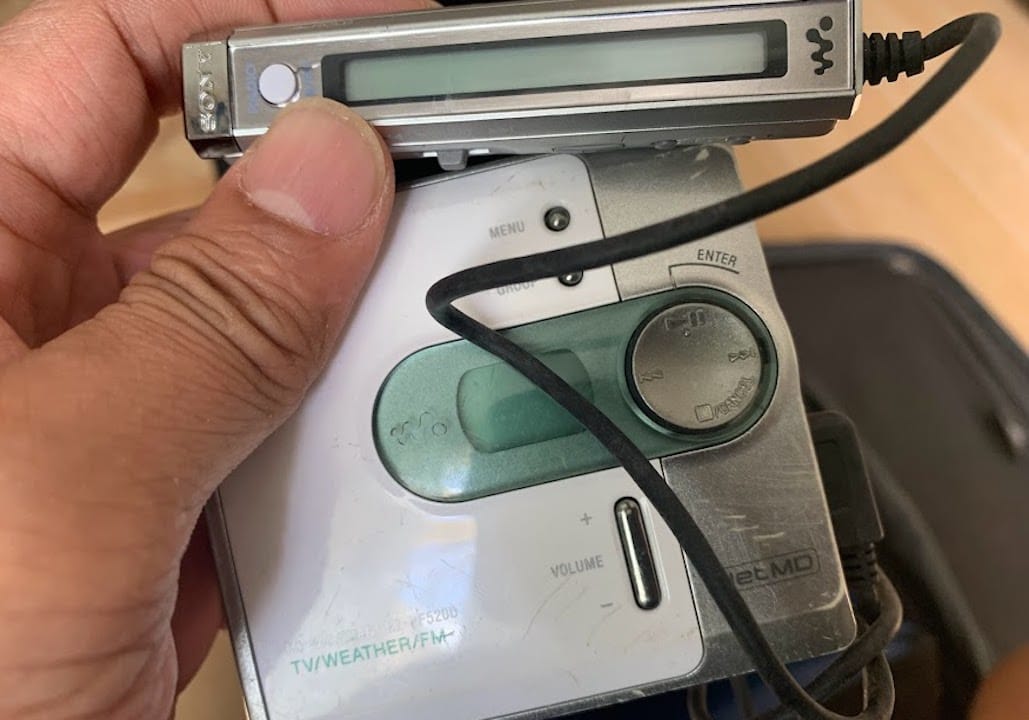

Sony kept iterating, introducing features that tried to extend the MiniDisc’s lifespan. In 2000, MiniDisc Long Play (MDLP) let a disc hold two to four times as much music through tighter compression. NetMD followed, promising a digital bridge between computers and MiniDisc units, although Sony’s clunky, restrictive software frustrated users who had grown accustomed to the free flow of MP3s. Then came Hi-MD in 2004, which boosted storage to a full gigabyte and, more importantly, allowed recording in uncompressed PCM audio, finally giving purists the CD-quality sound they had been demanding.

But technology waits for no one. By the time Hi-MD arrived, Apple’s iPod had already begun reshaping how the world consumed music. The promise of carrying an entire library in your pocket, with no discs to swap or blank media to purchase, was irresistible. Streaming services would later finish the job, rendering physical music formats optional at best. Sony stopped shipping its MiniDisc Walkman players in 2011, and in early 2025, the company announced it would cease manufacturing recordable discs altogether.

The cult of the MiniDisc

Yet MiniDisc remains alive, not in the mainstream, but in the cracks of modern audio culture where nostalgia, ritual, and creative curiosity thrive. Among collectors and music obsessives, it has earned a place beside vinyl records and cassettes as a format that represents more than just playback—it represents an experience.

To slide a MiniDisc into a Walkman or a deck is to engage with music deliberately. Each disc can be labeled, tracks renamed or reordered, and recordings trimmed or spliced directly on the device. Unlike the infinite scroll of Spotify, the MiniDisc imposes a kind of discipline. You think about what you want to record, how you want to name it, and which takes are worth keeping. It forces curation in a world drowning in algorithmic abundance.

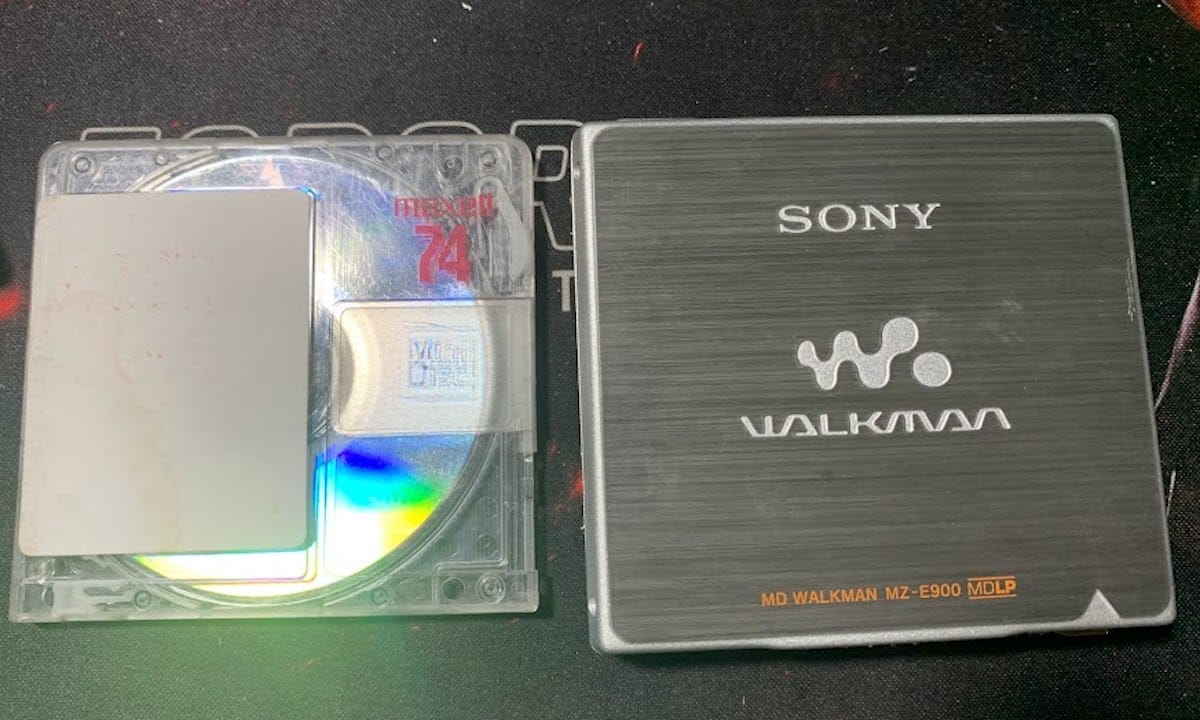

Communities of enthusiasts still trade blank discs online, repair aging devices, and even release new albums in MiniDisc format. Independent labels, especially in experimental and electronic music, issue limited-edition runs on MD as a kind of boutique collectible. The appeal lies not just in the sound, but in the tangible object: a palm-sized cartridge that embodies care, intention, and permanence.

The MiniDisc’s durability and portability remain hard to beat among disc-based formats. The cartridge design makes it practically immune to scratches, a problem that has plagued CDs since their inception. The anti-skip buffer makes it reliable for live performance playback, field recording, or simply walking with music in your pocket. The ability to re-record over the same disc thousands of times without signal degradation gives it a practical edge over both cassettes and CDs.

In the studio, Hi-MD units such as Sony’s MZ-RH1 became indispensable tools for musicians who needed portable PCM-quality recording. Producers and sound designers used MDs for capturing rehearsals, field sounds, and demo takes. Tascam and TEAC released studio decks that integrated MiniDisc recording with CD functionality, making them reliable workhorses in project studios. The format’s editing features—splitting, combining, and erasing tracks without touching a computer—remain a kind of quiet magic that most modern gear still doesn’t replicate in such a portable form.

But the downsides have always been part of the story. ATRAC compression, while innovative, left artifacts that some listeners never forgave, especially in long-play modes that sacrificed fidelity for capacity. Sony’s decision to lock users into proprietary software during the NetMD era alienated many at the exact moment when MP3 culture was exploding. Today, the scarcity of blank media and the rising cost of secondhand players make MiniDisc a difficult habit to maintain. Devices break, batteries degrade, and with Sony pulling the plug on new disc production, the supply chain is hanging by a thread.

The collector’s market

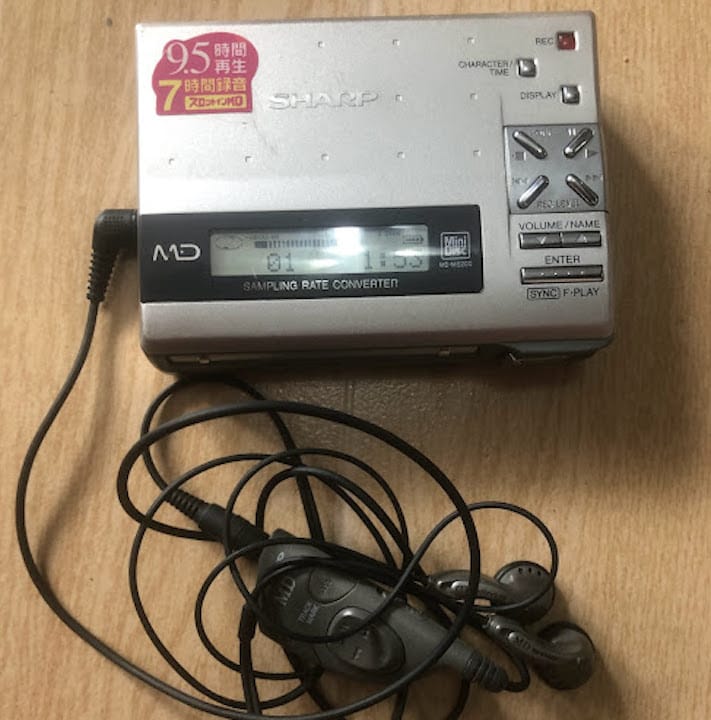

Despite these challenges, demand for top-tier units has surged on the resale market. Sony’s MZ-RH1, the final flagship portable recorder, is treated like a holy relic among enthusiasts. Earlier models from Sharp and Panasonic are also sought after for their design quirks and unique sonic qualities. Studio decks from Tascam and Denon still find homes in project studios, where engineers prize them for their reliability and integration with analog setups.

Blank discs themselves have become collectible items. Early translucent Sony designs, special editions with metallic shells, and even no-name brands are traded online like rare vinyl pressings. The scarcity has created a market where enthusiasts willingly pay premiums to continue feeding their players, underscoring just how strong the devotion to the format remains.

For many, the MiniDisc’s revival is about more than sound quality. It represents an intentional way of listening and recording in an age of endless convenience. Much like vinyl, which reemerged from obsolescence to become a billion-dollar industry again, MiniDisc appeals to those who crave tangibility. It asks you to slow down, to engage with music as a physical artifact rather than a fleeting stream.

In studios, the format still has creative value. Some producers use ATRAC’s compression as a sonic filter, giving recordings a distinct character. Field recordists appreciate the ruggedness of Hi-MD units in situations where laptops or flash recorders feel fragile. Even if not the main recording medium, MiniDisc can serve as a dependable backup or as a creative tool in hybrid digital-analog workflows.

The question now is not whether MiniDisc will make a mainstream comeback—it won’t—but whether it can persist as a niche format sustained by communities of enthusiasts. As Sony phases out blank media, the future depends on the dedication of users who continue to refurbish machines, trade supplies, and advocate for the format’s unique virtues. Independent labels may keep pressing limited MD releases for collectors. Hobbyists might hack together new software tools to improve PC integration or even experiment with manufacturing compatible blank discs.

What seems clear is that MiniDisc has crossed into the territory of cultural artifact. It belongs to the same family as vinyl and cassettes: obsolete by commercial standards but treasured by those who see value in its tactile, deliberate rituals. For musicians and listeners who still use it daily, it remains not just a format but a philosophy—a refusal to surrender entirely to the frictionless convenience of the cloud.

The irony is that MiniDisc, once dismissed as a failed format, now thrives precisely because it failed. In a music industry dominated by the intangible, its survival depends on being physical, scarce, and resistant to the stream. Each disc, each label scrawled in pen, each session captured in ATRAC or PCM becomes part of a story that the format’s devotees are determined to keep telling.

In the end, the MiniDisc may never reclaim its place in the mainstream, but that was never the point. Its value lies in its stubbornness, its resilience, and its ability to inspire loyalty decades after its commercial death. For those of us still sliding in discs, pressing record, and watching the tiny display flicker with life, the format is not dead. It is simply underground, and perhaps that’s where it was always meant to be.
